Artificial Intelligence (AI) is no longer a concept from science fiction; it is a technology that actively shapes our daily lives, powering everything from search engines and language translation to generative art and autonomous vehicles. But this revolution isn't just about software. It's built on a foundation of highly specialized, incredibly powerful hardware: AI chips.

These chips, also known as AI accelerators, are the silent workhorses that make modern artificial intelligence possible.

What Are AI Chips and Why Do We Need Them?

For decades, the Central Processing Unit (CPU) was the "brain" of all computing. CPUs are brilliant generalists: a small number of powerful cores, each built to run a wide variety of tasks, mostly one instruction stream after another (serially), at very high speeds. They are the master chefs of computing, capable of handling any complex recipe you give them.

However, the core mathematics of modern AI—specifically deep learning and neural networks—doesn't need a master chef. It needs a giant kitchen staff.

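Neural networks operate by performing billions of simple multiply-and-add operations, usually organized as matrix multiplications. Most of these operations are independent of one another, so they can run at the same time (in parallel). A CPU, with only a handful of cores working through them largely in sequence, becomes a massive bottleneck. It’s simply not the right tool for the job.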

This is where AI chips come in. They are designed from the ground up for one primary purpose: massive parallel processing.
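To make the parallelism concrete, here is a minimal Python sketch of matrix multiplication in which every output cell is computed as its own independent task. The names `dot_cell`, `matmul`, and `multiply_add_count` are illustrative, not from any library, and the thread pool only stands in for the idea of "many workers at once". It is not how a GPU schedules work, and it will not run faster in standard Python.

```python
from concurrent.futures import ThreadPoolExecutor


def dot_cell(a, b, i, j):
    """Compute one output cell: row i of a times column j of b."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul(a, b, workers=4):
    """Multiply a (n x m) by b (m x p), one independent task per output cell."""
    n, p = len(a), len(b[0])
    cells = [(i, j) for i in range(n) for j in range(p)]
    # No cell depends on any other cell, so the tasks can run in any order
    # or all at once. An accelerator exploits exactly this independence.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        flat = list(pool.map(lambda ij: dot_cell(a, b, *ij), cells))
    # Regroup the flat list of results into n rows of p values.
    return [flat[i * p:(i + 1) * p] for i in range(n)]


def multiply_add_count(n, m, p):
    """Number of multiply-add steps in an (n x m) by (m x p) product."""
    return n * m * p


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
print(matmul(a, b))                          # [[19, 22], [43, 50]]
print(multiply_add_count(4096, 4096, 4096))  # 68719476736
```

A single product of two 4096 x 4096 matrices already takes roughly 69 billion multiply-adds, and a large model performs many such products per input. That is why hardware with thousands of simple parallel units pays off.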

The Key Players: Types of AI Accelerators

The term "AI chip" isn't one-size-fits-all. Different types of chips are optimized for different AI tasks.

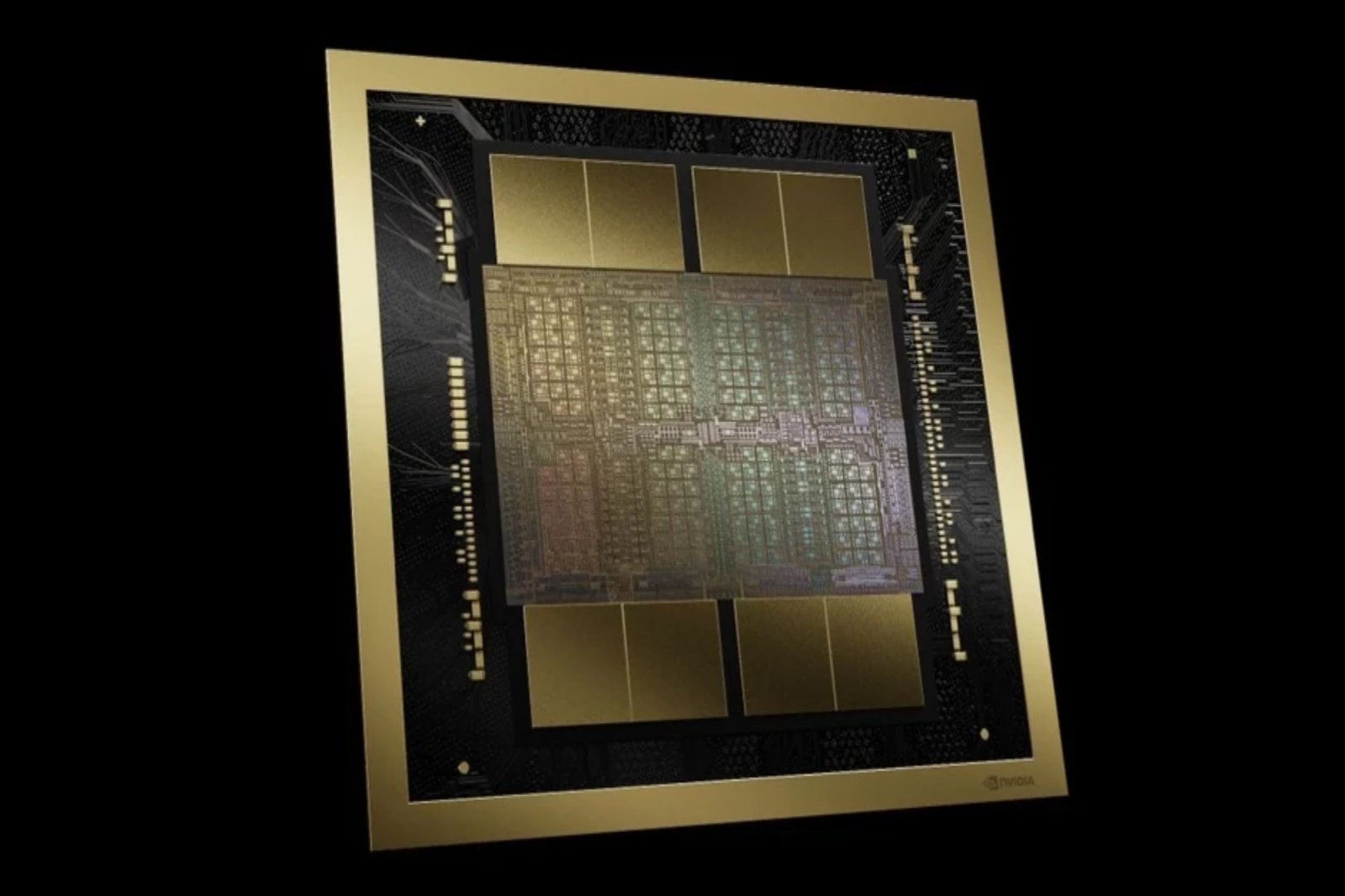

1. GPUs (Graphics Processing Units)

- What they are: GPUs were originally designed to render graphics for video games, a job that also requires massive parallel calculations to color millions of pixels at once. Researchers later discovered that the same design was almost perfectly suited for deep learning.

- Best for: Training. Nvidia dominates this market. The GPU architecture, with thousands of simple cores, is ideal for the heavy-duty number crunching required to train large AI models.

2. ASICs (Application-Specific Integrated Circuits)

- What they are: These are the ultimate specialists. An ASIC is a chip custom-built to do one specific task and nothing else. Because it’s hyper-specialized, it can be incredibly fast and power-efficient.

- Best for: Inference at scale. The most famous example is Google's TPU (Tensor Processing Unit), which is custom-designed to accelerate the tensor math behind neural networks and powers services like Google Search and Translate. The first TPU handled inference only; later generations are also used for training.

3. FPGAs (Field-Programmable Gate Arrays)

- What they are: FPGAs are the chameleons of the chip world. Unlike an ASIC, whose logic is fixed when the chip is manufactured, an FPGA's internal circuitry can be reconfigured and reprogrammed after it leaves the factory.

- Best for: Flexibility. They offer a middle ground between the general-purpose CPU and the hyper-specific ASIC. This is useful for AI applications where the algorithms (models) are evolving quickly.

4. NPUs (Neural Processing Units)

- What they are: This is a more general term for processors optimized for neural network tasks, often found in consumer devices.

- Best for: On-device (Edge) AI. The "Neural Engine" in Apple's iPhones and M-series chips is a well-known example. It's an NPU designed to run AI tasks (like Face ID or photo processing) directly on the device, without needing to connect to a cloud server. Skipping the network round trip makes responses faster, the dedicated hardware uses less power than general-purpose cores for this work, and personal data stays on the device.

The Two Big Jobs: Training vs. Inference

To understand AI chips, it’s crucial to know the two main phases of an AI's life:

- Training: This is the "school" phase. An AI model (like GPT-4) is fed massive amounts of data (e.g., a large share of the public web) and "learns" to find patterns. This process is incredibly computationally expensive, requires enormous amounts of power, and is usually done on large clusters of GPUs or similar accelerators in data centers.

- Inference: This is the "real-world" phase. Once the model is trained, it is used to make predictions or generate new content (e.g., answering your question or creating an image). Inference needs to be fast and efficient. This is where ASICs, FPGAs, and NPUs often shine, both in the cloud and on local devices (known as "edge computing").
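The gap between the two phases can be sketched with a toy model. The Python below is an illustration only, assuming a one-variable linear model fitted by plain gradient descent; the names `predict` and `train`, the learning rate, and the epoch count are made up for this example. Real models have billions of parameters, but the shape of the work is the same: training loops over the data many times, while inference is a single forward pass.

```python
def predict(weight, bias, x):
    """Inference: one cheap forward pass through the model."""
    return weight * x + bias


def train(samples, epochs=5000, lr=0.01):
    """Training: repeatedly nudge weight and bias to reduce squared error."""
    weight, bias = 0.0, 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in samples:
            error = predict(weight, bias, x) - y
            # Gradient of the mean squared error with respect to each parameter.
            grad_w += 2 * error * x / n
            grad_b += 2 * error / n
        weight -= lr * grad_w
        bias -= lr * grad_b
    return weight, bias


# Toy data generated from y = 3x + 1 (an assumption for this sketch).
samples = [(x, 3.0 * x + 1.0) for x in range(10)]

weight, bias = train(samples)       # expensive: 5000 passes over the data
answer = predict(weight, bias, 20)  # cheap: one multiply and one add
print(round(answer, 2))             # close to 61.0
```

Here training calls `predict` 50,000 times to fit two numbers, while answering a new question takes one call. That asymmetry is why training favors raw throughput and inference favors low latency and low power.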

The Future of AI Hardware

The race for AI dominance is a hardware race. The future of AI chips is focused on two major fronts:

- Energy Efficiency: Training large models consumes an astronomical amount of electricity. The next generation of chips must do more with less power.

- Neuromorphic Computing: This is a more radical approach. Instead of just performing math quickly, neuromorphic chips are designed to structurally mimic the human brain. They aim to process information in a fundamentally different, more efficient way, using "spikes" of energy, much like our own neurons.
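To give a feel for what "spikes" means, here is a minimal sketch of a leaky integrate-and-fire neuron, a simple textbook model often used to explain spiking behavior. It is an assumption that this model is a fair stand-in for the idea; real neuromorphic chips differ in many details. The function name `simulate_lif` and the `threshold` and `leak` values are illustrative.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9):
    """Return a list of 0/1 spikes, one per time step of input current."""
    potential = 0.0
    spikes = []
    for current in input_current:
        # The stored potential leaks a little, then the new input is added.
        potential = potential * leak + current
        if potential >= threshold:
            spikes.append(1)  # fire a spike
            potential = 0.0   # and reset
        else:
            spikes.append(0)  # stay silent
    return spikes


print(simulate_lif([0.5, 0.5, 0.5, 0.0, 0.0, 0.5, 0.5, 0.5]))
# [0, 0, 1, 0, 0, 0, 0, 1]
```

The neuron stays silent until enough input accumulates, and with no input it produces no spikes at all. Hardware built around this behavior can, in principle, spend energy only when something happens, which is where the hoped-for efficiency comes from.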

In conclusion, AI chips are the critical infrastructure of the 21st century. They are the engines driving the AI revolution, and the battle for who can build the fastest, most efficient, and most powerful chip will define the next decade of technological progress.
